AI Transformation Patterns: The Playground

27th April 2026 — If you take current AI transformation advice at face value, the work looks done once the tools are bought.

Claude Code licences for the engineers. An agentic workflow layer for everyone else. A Head of AI - hired from a competitor. A Centre of Excellence. A working group reporting to the COO. Quarterly steering committee. Vendor playbooks. Training rolled out by HR. Roadmap items tagged “AI” in the OKR sheet. Decks that measure productivity in hypothetical people-hours saved.

And the business still moves like it did a year ago.

The tooling is not the problem - the tooling is usually fine. What’s missing are the patterns that turn those tools into a business that moves differently.

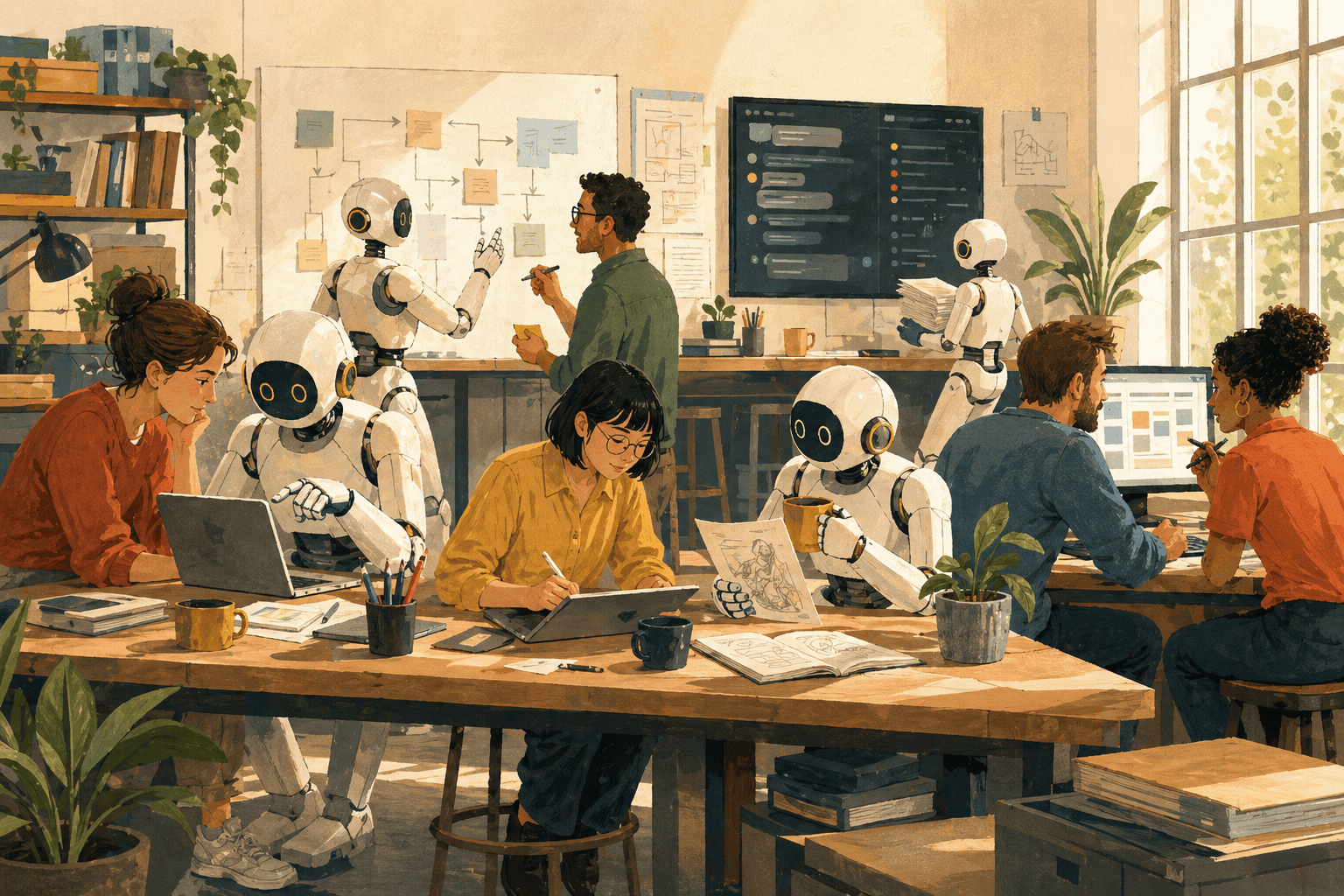

Patterns that build on what AI changed. The first is the simplest, and we’re seeing it already in our most advanced customers.

Letting your people go surfing

The cost of letting your people innovate on process and tooling has collapsed, provided it is done right. A claims adjuster can build a working prototype of a denial-letter assistant by Friday afternoon. An investment-banking associate can take a deal from raw filings to the investment committee by Saturday morning.

The new bottleneck is validating ideas quickly enough to accelerate the business. What if the procurement team’s vendor-comparison spreadsheet became a chat interface? What if a partner’s deal-segmentation and review framework scaled across the firm overnight? Each is a thesis about how AI could change a slice of how the business operates. No steering committee can answer them. No RFP can answer them. The only way to answer is to try.

Enterprise IT was designed around the opposite bottleneck. The whole machine is calibrated for a world where building anything is expensive, slow and risky - so the rational move is to spend a lot of upstream effort deciding what to build before anyone writes a line of code. Eighteen-month roadmaps. Three-year transformation programmes. Steering committees that meet every six weeks to look at slides. The discipline that built the company is real, and was correctly earned.

The Playground builds the opposite capability: answering those questions by trying, quickly and cheaply.

A safe space to innovate

Inside the traditional enterprise apparatus, certainty has to come before action. A Playground is the place in the enterprise where that wiring is deliberately reversed.

The Playground has two parts. The first is a lab: a venue where someone with an idea can build it without raising a ticket, writing a business case or having to throw it over a wall to a dev team. What makes that real, not aspirational, is upstream work. The lab ships pre-equipped - connectors to the systems of record, scoped tokens, sandboxed copies of the data, key management - provided once, in standard ways, so an individual employee can build against the company’s actual surface without negotiating each dependency. The cost of trying something falls to near zero because the dependencies are already resolved. One person, one afternoon, one prototype that either survives contact with the work or doesn’t.
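As a rough illustration of what "provided once, in standard ways" could look like, here is a minimal Python sketch of a lab registry. Everything in it is an assumption made for illustration: the `Connector` and `Lab` classes, the `claims_db` system name and the scope strings are hypothetical and do not describe any particular lab.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Connector:
    system: str              # hypothetical system-of-record name
    scopes: frozenset[str]   # what the scoped token allows, e.g. {"read"}
    sandboxed: bool = True   # points at a sandboxed copy, not production


@dataclass
class Lab:
    connectors: dict[str, Connector] = field(default_factory=dict)

    def provide(self, connector: Connector) -> None:
        """The platform team registers a connector once, for every builder."""
        self.connectors[connector.system] = connector

    def can(self, system: str, action: str) -> bool:
        """A prototype may act only on sandboxed data, within pre-approved scopes."""
        c = self.connectors.get(system)
        return c is not None and c.sandboxed and action in c.scopes


# Usage: the dependency is resolved once, so the builder never negotiates it.
lab = Lab()
lab.provide(Connector("claims_db", frozenset({"read"})))
print(lab.can("claims_db", "read"))   # True
print(lab.can("claims_db", "write"))  # False
```

The point of the sketch is the division of labour: `provide` is upstream work done once, while `can` is the only thing an individual builder ever touches.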

The second is traction: a way for what got built to find internal users and surface its product-market fit (PMF). In some companies traction comes from a forced cadence - weekly or fortnightly demos, where the work gets shown to the rest of the company. In others, the room is competitive enough that traction surfaces on its own; people see each other’s tools in Slack, ask how it was done, and the good ones spread without anyone running a meeting.

What the builders need, more than budget or permission, is the dopamine hit of seeing their work spread - used, talked about, asked after. That’s the loop that gets them building the next thing. Without traction in some form, people ship into a void - and stop.

Together, the two parts close the loop in public - often enough that the company can feel the gap between what gets tried and what gets kept.

Some Playgrounds are trivial - a shared subscription and a handful of email and calendar connectors. Others, especially in regulated industries, take real engineering to stand up. Whatever the shape, people end up innovating without external dependencies to slow them down or to point at when nothing ships, which makes performance in this regard highly legible - to themselves, their teams, and the rest of the company.

Ramp’s playbook for getting their company “AI pilled” is one of the clearest public accounts of a Playground operating at scale.

Start where the team is

The Playground pattern is the same everywhere; the implementation isn’t. A trading desk full of partners who haven’t touched a command line in fifteen years needs a different lab setup, and a different way to find traction, than a product team that already lives in Git. The two need different stacks, different cadences, different definitions of what “built it themselves” even means.

Designing the Playground wrong is expensive.

The wrong stack burns the early adopters.

The wrong traction mechanism leaves people shipping into a void.

The wrong cadence convinces the team that the Playground is just another management fad.

AI has collapsed the cost of building so much that what used to take a team now ships from one person - which is what the Playground is built around: one person, one lab, no permissions, no dependencies. What’s left is working out which kind of lab will unleash the creativity of a given team - and like the good consultants we are, we have a 3×3 to help.

Two team properties determine the shape: talent density (the team’s average individual quality) and dev tooling fluency (how comfortable the team is operating in engineer-grade surfaces - Git, command line, structured prompts, plaintext over GUI). Each is gradable - low, medium, high - and together they give a 3×3 of nine cells. Stack choice and traction mechanism both fall out of which cell the team sits in.

| | Low dev tooling fluency | Medium dev tooling fluency | High dev tooling fluency |
|---|---|---|---|
| High talent density | VC partners, law firm partners, senior execs at non-tech companies. Sharp, allergic to Git. Chat-based agents over messaging and email surfaces, light cadence - or upskill the team and move right, which is sometimes cheaper than dumbing down the tool. | McKinsey / BCG-style consulting teams. Picks up tools fast but can’t be assumed to live in dev workflows. Hybrid stack, structured prompt libraries, fortnightly demos. | Google, Meta, Box, Ramp product teams. CLI agents and Git native. Full developer-grade collaboration, weekly cadence, public PRs. |
| Medium talent density | Mid-market with strong execs but no engineering culture. Heavily curated tool selection, top-down KPI cadence, enablement budget. | Most “good” enterprises. Middle of the road - pick a stack, standardise, fortnightly demos. | Mid-tier tech companies. Developer-grade tools, but lean on top-down cadence to force visibility. |
| Low talent density | Large legacy bureaucracies. Heavy top-down structure, low-abstraction tooling, slow cadence to absorb each step before moving on. | In-house tech teams at large non-tech companies, where the working stack is older and version control isn’t standard. Top-down KPI cadence, curated tools, heavy enablement; the team can ship valuable Playground work, it just won’t look like PR-merge counts. | Rare; treat as medium. |

The stack choice itself is downstream of dev tooling fluency - low fluency forces a high-abstraction stack (chat-based agents over messaging surfaces); high fluency unlocks the low-abstraction stack (CLI agents over Git). The traction mechanism is downstream of talent density - high talent-density rooms surface traction through competitive social pressure; lower talent-density rooms need a forced cadence to manufacture it.
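The two rules in the paragraph above can be written down as a small lookup. What follows is a minimal sketch, not a definitive encoding of the 3×3: the names `Level`, `PlaygroundShape` and `playground_shape` are hypothetical, the labels are paraphrases rather than cell-by-cell quotes, and reading "Rare; treat as medium" as "treat as medium talent density" is our assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Level(Enum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


@dataclass(frozen=True)
class PlaygroundShape:
    stack: str
    traction: str


# Rule 1: the stack is downstream of dev tooling fluency.
STACK_BY_FLUENCY = {
    Level.LOW: "high-abstraction: chat-based agents over messaging surfaces",
    Level.MEDIUM: "hybrid: curated tools plus structured prompt libraries",
    Level.HIGH: "low-abstraction: CLI agents over Git",
}

# Rule 2: the traction mechanism is downstream of talent density.
TRACTION_BY_TALENT = {
    Level.LOW: "forced cadence: top-down and slow",
    Level.MEDIUM: "forced cadence: regular demos",
    Level.HIGH: "competitive social pressure, light cadence",
}


def playground_shape(talent_density: Level, fluency: Level) -> PlaygroundShape:
    # Assumption: the rare low-talent / high-fluency cell is handled
    # as if the team had medium talent density.
    if talent_density is Level.LOW and fluency is Level.HIGH:
        talent_density = Level.MEDIUM
    return PlaygroundShape(
        stack=STACK_BY_FLUENCY[fluency],
        traction=TRACTION_BY_TALENT[talent_density],
    )


# Usage: a partner-heavy firm that is sharp but allergic to Git.
shape = playground_shape(Level.HIGH, Level.LOW)
print(shape.stack)
print(shape.traction)
```

Keeping the two lookups separate mirrors the claim that stack and traction are independent decisions. A real assessment would also carry the cell-level detail from the table, which this sketch deliberately drops.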

The high talent-density row has a move the others don’t. Most teams pick a cell and design around it. For high talent-density teams, upskilling the team is sometimes cheaper than dumbing down the tool. VC firms we’re working with have invested in upskilling instead of working around their tool ceiling, and within the last two months, at the time of writing, they are already tracking PR-merge counts across the firm.

Build the right Playground for a team, and the team will produce a queue of validated ideas that move the business - the procurement chat interface, the deal-segmentation framework that travels, the denial-letter assistant. The discovery mechanism works because most ideas don’t land. What survives is the few that do - a queue worth investing in, rather than a steering committee waiting to be presented with shortlists. This is the Jevons paradox firing inside the org: making software cheap to try makes latent internal demand for it visible.

Aaron Levie, CEO of Box, has been making the same point in public.

One thing transcends the cells: every team needs traction in some form. Skip the work of figuring out which mechanism produces it for that specific team, and what’s left is a tool subscription that nobody uses to change anything.

Doubling down on the winners

A Playground that’s working produces a queue of validated ideas faster than the rest of the org can absorb them. That’s the point - and also the problem. Most of those ideas are MVPs in the consumer-app sense: speed-to-validation, not robustness, built by an individual iterating to traction over a few days, not someone designing an enterprise-grade solution. So the queue has to lead somewhere. Winning ideas hand off to a pro team that rearchitects them into real software, often most of the way down to the foundations. Without that hand-off, the Playground is a graveyard of clever prototypes nobody can rely on.

The way the Playground avoids that fate is also the way it sorts the people inside it. There have always been many ways to be a high performer in an enterprise - revenue, leadership, depth, deals. The Playground adds another, and makes that contribution far easier to demonstrate: a high-agency employee who can move a business metric, both during the build and after the hand-off, now has a direct, objective way to show it.

Polarising perf reviews is the mechanism. The market is already seeing the same amplification.

Inside the org, that amplification lands in the perf review - top performers get more budget, more responsibility, more authority, and the gap widens. The first dopamine hit gets the next prototype built - work used, talked about, asked after. The deeper one shows up at increment and promotion time, when the people whose ideas moved business metrics are visibly the people whose careers move too. Once that lands publicly, the Playground is self-sustaining.

The Playground earns this by giving up things real production software has to provide. It’s not security-hardened: tokens are scoped, data is sandboxed, but the surface is wider than enterprise security review would normally tolerate. It’s not low-drift: tools change, prompts change, the same prototype rebuilt next month may use a different stack. It’s not SLA-bound: nothing in the Playground is on a pager. These are the boundaries by design - the price of inexpensive, fast, visible and acceptable. The exit gate is where that price gets paid back. Hardening for production is, concretely, adding what the Playground left out: the security posture, the drift control, the operational guarantees. Read this way, the boundaries become the pro team’s spec.
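Reading the boundaries as the pro team’s spec can be made concrete with a small checklist. This is only a sketch under our own assumptions: the `Prototype` fields and the `hardening_gaps` helper are hypothetical names, and a real exit gate would check far more than three booleans.

```python
from dataclasses import dataclass


@dataclass
class Prototype:
    name: str
    security_reviewed: bool = False   # security posture
    stack_pinned: bool = False        # drift control
    has_slo_and_pager: bool = False   # operational guarantees


def hardening_gaps(p: Prototype) -> list[str]:
    """Return what still has to be added before the prototype can graduate."""
    checks = {
        "security posture": p.security_reviewed,
        "drift control": p.stack_pinned,
        "operational guarantees": p.has_slo_and_pager,
    }
    return [label for label, done in checks.items() if not done]


# Usage: a winning prototype that has only passed security review so far.
winner = Prototype("denial-letter assistant", security_reviewed=True)
print(hardening_gaps(winner))  # ['drift control', 'operational guarantees']
```

An empty list is the exit gate: the three things the Playground gave up have all been paid back.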

The Nature of Play

When people play, they get hurt. The same properties that make the Playground work - ease of trying, speed of building, the wide on-ramp for anyone with an idea - are the ones that buy you exposure: looser controls, more drift in what’s running, larger surface for things to leak. A retail bank’s Playground will be a thinner instrument than a series-B startup’s, because the bank is paying for blast radius the startup isn’t.

Playing means looking stupid in public, sometimes - shipping a prototype that doesn’t land, demoing a tool that nobody wants, scoring an own goal in front of the team. Most large companies have spent decades training their people to never do that. A culture that punishes public failure shrinks the Playground back into the apparatus that already wasn’t working. The CEOs who treat this seriously model the behaviour from the top. Tobi Lütke is the cleanest public-company example - mandating AI use across Shopify in writing and standing behind it a year later.

Two failure modes are worth naming, because both are quiet ones. The first is what happens without an exit gate: the queue builds up, prototypes accumulate, nothing graduates, and after six months the Playground is a graveyard of clever work with no production reach. The second is what happens without traction: builders ship into a void and stop. Neither failure announces itself. Both look exactly like a Playground that is working, right up until you notice that nothing has changed.

A third failure mode is louder. A bank whose Playground operates without the governance a bank requires is a fire waiting to start. The first leak is the moment it stops being a discovery engine: a compliance breach, a customer-data exposure, a sandboxed dataset that wasn’t sandboxed enough. After that, the board meeting is shorter than usual, and the AI transformation roadmap is delayed by years.

The Source of Innovation

The validated ideas, the people whose careers move with them, the queue that the rest of the org’s apparatus is built to receive - all of it starts here.

Build the Playground, and the standing organisational machinery you already have - the procurement processes, the steering committees, the eighteen-month roadmaps - starts working on something the business actually wants.

That is the work worth starting with.